Data engineering is one of the most lucrative career options in the STEM field. There is a high demand for well-rounded, qualified experts – and the Python-based DataCamp Data Engineer career track can help you become one of them. So, saddle up – today, we’ll be making our way through every course on this career track.

Of course, before we get started with this DataCamp Data Engineer review, we must first figure out what this role means and how it differs from data scientists. You’ll find out what makes data engineering such an in-demand career choice. And, of course, you’ll see exactly how DataCamp can help you get started.

You might’ve already figured it out – the DataCamp Data Engineer with Python career track will act as your stepping stone into this industry. We’ll be looking at the entire track – every course you’ll be working on as part of your training. In over 70 hours, you’ll be able to go from a junior Python programmer to a market-ready data engineer.

So, let’s begin your new career journey.

Why Should You Choose the DataCamp Data Engineer Path?

Before we dive into the DataCamp Data Engineer review, let’s first establish what this career track entails and what skills you need to carve out your place in the industry. First, we’ll take a look at what data engineers do and why this field is so lucrative.

You might be tempted to use the terms “data engineer” and “data scientist” interchangeably. After all, both appear to originate from the same industry and are incredibly valuable roles in the tech sector. However, there are some significant differences between the two.

Data scientists work with data directly. They collect data and perform its analysis. They essentially deal with the entire flow of data and transform it into visualizations that we can understand more easily.

But to ensure that the data flows smoothly, it needs an infrastructure. Here’s where data engineers come in – they create systems that ensure data scientists can do what they need to do. If the data is the stream that needs to travel across the network, data engineers are responsible for building the pipeline.

So, data engineering is a pretty important job in the world of data science and business analytics. It requires a good understanding of data systems, knowledge of programming languages, and attention to detail.

You could say that data engineers are jacks of all trades – they have to know Python and SQL, among other languages, work with different operating systems, understand data warehouses and machine learning, and be able to work with business intelligence tools. And that’s without getting into more general skills, like creativity and attentiveness.

Having the full scope of data literacy skills and technical prowess is no small feat – according to LinkedIn’s 2020 US Emerging Jobs Report, data engineering is one of the most sought-after jobs.

So, how do you find your entry point in such a high-demand, high-skillset industry? Well, you can start your venture by following the DataCamp Data Engineer with Python career track. It’s one of the many paths offered by the platform. Career tracks are a series of courses dedicated to preparing you for your future dream job.

DataCamp courses provide you with an engaging, gamified learning opportunity. However, before you jump into the DataCamp Data Engineer path, you should already have some understanding of how Python and SQL work. We’ve covered both DataCamp Python and SQL courses that are beginner-friendly, so you won’t have to wander far.

With 73 hours of content across 19 courses and 2 assessments, the DataCamp Data Engineer path will help you stay on track to becoming a well-rounded professional. Given the size of this career track, we can’t dive deep into each course. Nevertheless, here, you’ll find short descriptions of the courses.

We've grouped them into three categories: beginner, intermediate, and advanced. Of course, it’s recommended that you follow the courses in the order they are listed in the DataCamp Data Engineer path. However, feel free to spend longer on some courses – and even jump into another career or skill track, all for the same price!

Without further ado, here’s every course in the DataCamp Data Engineer career track, starting at the beginner level.

DataCamp Data Engineer Courses: Beginner

As I’ve already mentioned, knowing Python and SQL, to some extent, is a prerequisite to understanding the DataCamp Data Engineer path. So, when I say “beginner”, I mean this field specifically and not programming in general. With that out of the way, let’s take a look at the courses falling under this category.

The first category will help you hone your Python skills so that you can use this programming language efficiently. Of course, you’ll keep going back to Python throughout the DataCamp Data Engineer path, but nearly all of the first few courses are specifically Python-based.

To ensure that all different levels of budding data engineers are ready for the challenge, DataCamp has developed an easy-to-access Data Engineering for Everyone course.

For this first course, you won’t be required to put your coding skills to the test quite yet. Data Engineering for Everyone is a course designed to provide you with an understanding of what data engineers do and how their work aids data scientists.

In the first chapter, you’ll learn what data engineers are exactly. You’ll learn more about the importance of this role in data science and learn the basics of creating data pipelines.

Then, you’ll learn more about data structure and how to work with it to ensure that the analysts can easily search and organize the information they need. Finally, you’ll cover data processing. This involves tasks like data manipulation and cleaning to ensure that any unnecessary data is filtered out.

As you already know, this career track presumes that you already have some Python skills. After all, the full title of the entire track is Data Engineer with Python. So, you have to prove that you already understand this programming language well enough to stay on track.

Signal is DataCamp’s unique assessment system. It’s developed to help you figure out where your knowledge might be lacking and see how you can fill in these gaps. Signal provides you with a clear, visual outline of your strengths and weaknesses.

Based on your skills, you’ll get personalized recommendations for the courses to help you improve. You can look at this as an early checkpoint – which Python skills are you good at, and what should you focus on more? Completing this assessment will help you stay on track without getting lost on your data engineering journey.

And here’s the best part – you can complete the DataCamp Signal assessments for free. So, once your Python abilities have improved, you can jump right back into the DataCamp Data Engineer path – and feel more confident in your programming abilities.

While the Data Engineering for Everyone course gave you basic insights into this job, you haven’t learned about what tools it requires yet. The Introduction to Data Engineering course is here to cover exactly that.

You’ll reinforce your knowledge of this career path and what it entails. The core part of this course is familiarizing yourself with the data engineer toolkit. First and foremost, you’ll learn about databases and how to use them. Then you’ll take a look at some of the popular processing and scheduling tools.

Your goal for this course is to get the hang of the essential data engineering workflow – Extract, Transform, and Load (ETL). You’ll get a chance to work with raw data and, as part of your tasks, you’ll complete the whole ETL process in a DataCamp-based case study.
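To make the ETL idea a little more concrete, here's a minimal sketch of the three steps using only the Python standard library. It isn't taken from the course: the sample data, the `users` table, and the function names are all made up for illustration, and a real pipeline would read from files or APIs rather than an in-memory string.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; a real pipeline would read a file or call an API.
RAW_CSV = """name,signup_date,plan
 Alice ,2021-01-05,premium
Bob,2021-02-11,
carol,2021-03-20,Premium
"""


def extract(text):
    """Extract: parse raw CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: tidy names, normalise plan labels, drop rows with no plan."""
    cleaned = []
    for row in rows:
        plan = row["plan"].strip().lower()
        if not plan:
            continue  # skip records with a missing plan
        cleaned.append((row["name"].strip().title(), row["signup_date"], plan))
    return cleaned


def load(records, connection):
    """Load: write the cleaned records into a database table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS users (name TEXT, signup_date TEXT, plan TEXT)"
    )
    connection.executemany("INSERT INTO users VALUES (?, ?, ?)", records)
    connection.commit()


conn = sqlite3.connect(":memory:")  # in-memory database, assumed for the demo
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute("SELECT name, plan FROM users ORDER BY name").fetchall()
print(loaded)
```

Keeping each step in its own function means you can test and swap them independently, which is the main appeal of thinking in ETL terms.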

DataCamp Data Engineer Courses: Intermediate

With the essentials covered, let’s take it up a notch. The following courses will require you to have intermediate Python and SQL knowledge to work efficiently.

Since you’re already familiar with Python, you should know about the pandas library. It will act as your primary tool in this next step in your DataCamp Data Engineer path. You will learn how to utilize pandas to extract the necessary data from different file formats.

First, you’ll work with data extraction from flat files and see how they can be modified. You’ll be introduced to strategies for handling missing data and errors. Then, you will move on to loading Excel files. Spreadsheets are a very common method of data storage, so you’ll come across such data files a lot.

You’ll also get to put your SQL skills to use by processing data from databases. Finally, you’ll get to extract data from public databases using web APIs. Throughout this course, you’ll work on several case studies which will help you solidify your new knowledge and put your skills to the test.
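As a rough illustration of what this kind of work looks like, here's a short sketch that reads a flat file with pandas, handles a missing value, and then combines the result with data pulled from a SQL database. The sample data, column names, and the `cities` table are invented for the example, and filling gaps with the column mean is just one possible strategy, not necessarily the one the course recommends.

```python
import io
import sqlite3

import pandas as pd

# Hypothetical flat-file content; normally this would be a path to a CSV file.
CSV_TEXT = """city,temperature,reading_date
Vilnius,21.5,2021-06-01
Riga,N/A,2021-06-01
Tallinn,19.0,2021-06-02
"""

# Read the flat file, treating "N/A" as missing and parsing the date column.
df = pd.read_csv(
    io.StringIO(CSV_TEXT),
    na_values=["N/A"],
    parse_dates=["reading_date"],
)

# One simple strategy for missing data: fill gaps with the column mean.
df["temperature"] = df["temperature"].fillna(df["temperature"].mean())

# Load a lookup table from a database (an in-memory SQLite one for the demo).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE cities (city TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO cities VALUES (?, ?)",
    [("Vilnius", "Lithuania"), ("Riga", "Latvia"), ("Tallinn", "Estonia")],
)
countries = pd.read_sql_query("SELECT city, country FROM cities", conn)

# Combine the flat-file data with the database data.
merged = df.merge(countries, on="city", how="left")
print(merged)
```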

Data engineering requires thorough handling of the code. The systems you’ll be building will likely have to handle large loads of data. So, you have to make sure that the code you’re writing is not just as fool-proof as possible (after all, human error cannot be 100% avoided) but also efficient and fast to execute.

In this course, you’ll learn how to save your computational resources to increase the speed and effectiveness of the code you’re executing. You’ll be working with the Python Standard Library, as well as NumPy and pandas, which will allow you to access some of the most commonly used Python tools.

You’ll see how NumPy arrays work, look for bottlenecks in your code, and apply strategies to eliminate them. You’ll also optimize your code by profiling it and try your hand at writing loop patterns.
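Here's a small sketch of the kind of comparison this involves. It uses only the standard library (`timeit` and built-in sets) rather than NumPy, and the two functions are hypothetical examples, not course material. The exact timings will differ from machine to machine, so treat the printed numbers as indicative only.

```python
import timeit


def common_items_loop(first, second):
    """Find shared items with list membership tests (slow on large inputs)."""
    result = []
    for item in first:
        # `in` on a list scans it element by element.
        if item in second and item not in result:
            result.append(item)
    return result


def common_items_set(first, second):
    """Find shared items with a set intersection (hash-based lookups)."""
    return set(first) & set(second)


first = list(range(0, 2000, 2))   # even numbers
second = list(range(0, 2000, 3))  # multiples of three

# Time both approaches on the same input to locate the bottleneck.
loop_time = timeit.timeit(lambda: common_items_loop(first, second), number=20)
set_time = timeit.timeit(lambda: common_items_set(first, second), number=20)
print(f"loop: {loop_time:.4f}s  set: {set_time:.4f}s")
```

Both functions find the same items, but the set version avoids rescanning a list for every element, which is usually where the time goes.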

Writing Efficient Python Code is one of our featured DataCamp Python courses, which you can learn more about here.

Your Python code will feature a lot of functions – code components responsible for completing tasks and making your code easy to maintain and navigate. Learning the best practices for writing functions will help you immensely in your data engineering projects.

In this course, you will work with docstrings to craft code that is easy to read, maintain, and use. You’ll be introduced to context managers and decorators. Furthermore, to help you learn how to use functions in practice, you will get to work on case studies and practical programming tasks.
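If these three terms are new to you, the following sketch shows each of them in a few lines. The `log_calls` decorator, the `timer` context manager, and the `mean` function are hypothetical names chosen for this example, and the docstring layout follows one common convention among several.

```python
import functools
import time
from contextlib import contextmanager


def log_calls(func):
    """Decorator that records the name of every call in `log_calls.history`."""
    @functools.wraps(func)  # keep the wrapped function's name and docstring
    def wrapper(*args, **kwargs):
        log_calls.history.append(func.__name__)
        return func(*args, **kwargs)
    return wrapper


log_calls.history = []


@contextmanager
def timer(label, results):
    """Context manager that stores the elapsed seconds under `label`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        results[label] = time.perf_counter() - start


@log_calls
def mean(values):
    """Return the arithmetic mean of a non-empty sequence of numbers.

    Args:
        values: A non-empty sequence of ints or floats.

    Returns:
        The mean as a float.
    """
    return sum(values) / len(values)


timings = {}
with timer("mean", timings):
    average = mean([2, 4, 6])

print(average, log_calls.history, timings)
```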

You can learn more about Writing Functions in Python and other in-depth Python courses by clicking here.

The Unix shell is a command-line interpreter that you can use to run programs, including your Python scripts. Its usability is universal – with the shell, you can combine different programs, automate your programming tasks, and run your programs on cloud systems and clusters.

In the Introduction to Shell course, you’ll learn to use this command-line interpreter efficiently. You will get a brief history of the Unix shell and see how it has managed to remain one of the industry’s favorites even half a century later.

From a more technical standpoint, you’ll manipulate data. You’ll be using simple tools to complete some complex programming tasks. Unix is all about combining commands, so you’ll become a more efficient programmer with shell.

Finally, you won’t just be working with presets. By the end of the course, you’ll know how to create and combine your own tools that you can reuse in future projects.

The Data Processing in Shell course builds on the knowledge you garnered in the previous course. Here, you’ll be working on specific command-line skills. They will help you optimize your code, save time while working on your projects, and complete data processing with simple command lines.

You’ll learn to use the command line to download data from web servers. You’ll use a number of different tools, like curl and Wget. The csvkit command line library will help you make data previews, filtering, and manipulation processes easier.

This course uses public Spotify datasets, so you’ll get an opportunity to execute your programs using real-world data, providing you with an experience that you’ll be able to replicate in your data engineering projects.

If you’re looking into building cloud-based analytics pipelines, you need to master the art of Bash scripting. Bash is a scripting language that data engineers use for data manipulation and pipeline development. If you have no prior experience with Bash, this course will get you through the basics.

Knowing Bash scripting will allow you to execute an entire program with a single command. In this course, you’ll learn to write simple command-line pipelines and to work with string and numeric variables and control statements.

By the end of the Introduction to Bash Scripting course, you’ll know how to automate your programs and schedule executions to ensure the processes run smoothly even without your constant supervision.

You might be wondering – aren’t data science and data engineering two different things? They are. However, as a data engineer, you’ll be working closely with data scientists, and the two fields are intertwined.

Unit testing is a necessary part of project development. Testing individual units of the source code is useful to reduce the development time and see which code components actually work as expected. The Unit Testing for Data Science in Python course will teach you how to write a full test suite using Python.

In this course, you will learn how to write and run basic unit tests. Since no code is completely flawless from the beginning, you’ll learn how to detect and fix bugs. You’ll be able to correctly interpret the test results and know the current best practices for structuring unit tests.
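To give you an idea of what a basic unit test looks like, here's a small sketch built on `unittest` from the Python standard library. The course itself may use a different testing framework, and the `row_to_list` function and its test cases are invented for this example.

```python
import unittest


def row_to_list(row):
    """Split a tab-separated row into two values, or return None if malformed."""
    parts = row.rstrip("\n").split("\t")
    if len(parts) != 2 or "" in parts:
        return None
    return parts


class TestRowToList(unittest.TestCase):
    def test_valid_row(self):
        self.assertEqual(row_to_list("2,081\t314,942\n"), ["2,081", "314,942"])

    def test_missing_value(self):
        # The first field is empty, so the row counts as malformed.
        self.assertIsNone(row_to_list("\t293,410\n"))

    def test_missing_tab(self):
        # Without a tab separator there is only one field.
        self.assertIsNone(row_to_list("1,463238,765\n"))


# Build and run the suite explicitly so the script keeps running afterwards.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(TestRowToList)
result = unittest.TextTestRunner(verbosity=0).run(suite)
print(result.testsRun, result.wasSuccessful())
```

Each test checks one behaviour, so a failure tells you exactly which case broke.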

Python is a multi-paradigm language that can be used for object-oriented programming (OOP). This method of programming, where data and the code that works on it are bundled together into objects, is considered efficient and logical and prepares you to write cleaner, easier-to-follow code.

In the Object-Oriented Programming in Python course, you’ll learn the main principles of OOP and how you can implement them in your projects. Object-oriented code is easily reusable, which helps keep your future projects efficient and means you waste less time writing new lines of code.

This course will provide you with hands-on experience when it comes to developing your own attributes, constructors, and methods. By the time you’ve finished each chapter, you’ll be able to write clean, efficient, and, most importantly, functional code.
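In case attributes, constructors, and methods still sound abstract, here's a minimal sketch. The `Dataset` and `CleanDataset` classes are hypothetical and exist only to show the ideas, including how a subclass can reuse and adjust existing behaviour.

```python
class Dataset:
    """A named collection of numeric records."""

    def __init__(self, name, records=None):
        # The constructor sets up the object's attributes.
        self.name = name
        self.records = list(records) if records is not None else []

    def add(self, value):
        """Method: append a single record."""
        self.records.append(value)

    def total(self):
        """Method: return the sum of all records."""
        return sum(self.records)


class CleanDataset(Dataset):
    """A Dataset that skips missing (None) values when adding records."""

    def add(self, value):
        if value is not None:
            super().add(value)  # reuse the parent implementation


sales = CleanDataset("sales")
for value in [10, None, 5]:
    sales.add(value)

print(sales.name, sales.records, sales.total())
```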

To learn more about the Object-Oriented Programming in Python course, you can find our overview here.

The process of developing data pipelines is complex. When you’re just getting started, you often have to do a lot of work manually first to set up your future templates. Apache Airflow can help you accelerate this process – it’s used to automate the scheduling, error handling, and other processes related to your data engineering workflows.

The Introduction to Airflow in Python course will teach you the essentials of working with Apache Airflow. This course is very data engineering-heavy, so it’ll provide you with a good foundation to enter the field.

You’ll learn about the most common data engineering workflows and how Airflow can cut down the number of manual steps it takes to complete them.

You’ll see how to optimize your workflow time by monitoring your processes via Airflow. By the end of the course, you’ll be able to build your own production-quality workflows using Airflow and implement them in practice.
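Airflow describes a workflow as a set of tasks plus the dependencies between them, and then runs the tasks in a valid order. The sketch below is not Airflow code and doesn't use the Airflow API. It only illustrates that underlying idea with `graphlib` from the Python standard library (Python 3.9 or newer), and the task names are made up.

```python
from graphlib import TopologicalSorter  # available in Python 3.9+

run_log = []


# Hypothetical tasks; each one just records that it ran.
def extract():
    run_log.append("extract")


def transform():
    run_log.append("transform")


def load():
    run_log.append("load")


def report():
    run_log.append("report")


tasks = {"extract": extract, "transform": transform, "load": load, "report": report}

# Map each task to the set of tasks that must finish before it can start.
dependencies = {
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}

# Run every task in an order that respects the dependencies.
for name in TopologicalSorter(dependencies).static_order():
    tasks[name]()

print(run_log)
```

A real scheduler adds what this sketch leaves out, such as running on a timetable, retrying failed tasks, and monitoring.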

Spark is a tool you’ll often encounter if you’re working with data in Python. It’s used for parallel computation, particularly when dealing with substantial quantities of data. The Introduction to PySpark course will teach you all about using PySpark, the Python API for Apache Spark.

In this course, you’ll learn how to use Spark for your data management tasks. You’ll work on reading and writing tables – one of the most common methods of data presentation. Then, you’ll move on to the PySpark tool in particular. Here, you’ll see how to optimize your data queries and filter only the necessary data.

Before wrapping up, you’ll learn a bit about machine learning pipelines and how to develop them on your own. Once you’ve completed this course, you’ll be able to efficiently work with Spark and create your own data models.

You might’ve heard of the acronym AWS – Amazon Web Services. They’re cloud-based services that you can use to optimize your data engineering tasks and reduce the workload of your hardware. In this course, you’ll learn to work with S3, also known as the Simple Storage Service.

Cloud technology is growing increasingly popular. It’s secure, easy to manage, and a lot more lightweight and cost-efficient than maintaining your own in-house servers. So, knowing how to work with cloud services is essential for any good data engineer.

The Introduction to AWS Boto in Python course will provide you with the basics of working with AWS. You’ll learn how to set up the cloud for your data-related projects. The security aspects are covered to ensure you follow the right protocols and don’t compromise your data.

Of course, as with most DataCamp courses, you’ll get to put your skills into practice before you wrap up the course by working on case studies that use real-world data.

This is your second assessment checkpoint and the last stop before we get into the advanced stuff. As you’re already aware, DataCamp offers free assessments with Signal for all users to find their weaknesses and reinforce their strengths with individualized learning plans.

By now, you should be a proficient Python user, capable of working with different data management tools and cloud services. It’s time to freshen up your SQL skills. This assessment will determine your proficiency with SQL when it comes to analyzing data.

You’ll be able to track your progress and jump right back into the DataCamp Data Engineer path once you’re ready.

DataCamp Data Engineer Courses: Advanced

There’s only a handful of courses left ahead of us. As we’re entering the more advanced subjects, you’re getting one step closer to turning your dream career in data engineering into reality.

Relational databases are considered to be among the most efficient ways to store data. Such databases are used to organize data into relations – unique tables that are connected to each other via relationships. It's an efficient way to store data that helps you avoid redundancies.

The Introduction to Relational Databases in SQL course will teach you how you can develop such data storage tools for your own projects. You'll be working with real-life data collected during academic research to ensure that you gain experience that you can transfer into your work.

You'll learn how to move data from flat tables into the database and ensure its consistency. In addition to the practical skills, you will also get some tips and tricks to work as an efficient data engineer from day one.
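Here's a rough sketch of what moving data from a flat table into a relational structure can look like, using Python's built-in `sqlite3` module. The course works with its own dataset and database system, so everything here (the sample rows, the `universities` and `professors` tables, and the use of SQLite) is an assumption made for the example.

```python
import sqlite3

# Hypothetical flat table: the university name is repeated on every row.
flat_rows = [
    ("A. Smith", "North University"),
    ("B. Jones", "North University"),
    ("C. Brown", "South University"),
]

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # ask SQLite to enforce foreign keys
conn.executescript("""
CREATE TABLE universities (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE
);
CREATE TABLE professors (
    id            INTEGER PRIMARY KEY,
    name          TEXT NOT NULL,
    university_id INTEGER NOT NULL REFERENCES universities(id)
);
""")

for professor, university in flat_rows:
    # Store each university once; the UNIQUE constraint blocks duplicates.
    conn.execute(
        "INSERT OR IGNORE INTO universities (name) VALUES (?)", (university,)
    )
    (university_id,) = conn.execute(
        "SELECT id FROM universities WHERE name = ?", (university,)
    ).fetchone()
    conn.execute(
        "INSERT INTO professors (name, university_id) VALUES (?, ?)",
        (professor, university_id),
    )
conn.commit()

university_count = conn.execute("SELECT COUNT(*) FROM universities").fetchone()[0]
joined = conn.execute("""
    SELECT p.name, u.name
    FROM professors AS p
    JOIN universities AS u ON u.id = p.university_id
    ORDER BY p.name
""").fetchall()
print(university_count, joined)
```

The constraints do the consistency work: a university can't be stored twice, and a professor can't point to a university that doesn't exist.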

If you've worked your way up through the SQL Fundamentals skill track on DataCamp, you probably know what a great tool this language is when it comes to database management. Database design is one of the core tasks of a data engineer – so, you have to make sure you know what good database design entails.

In the Database Design course, you'll be working with the recommended industry practices to ensure your product offers high-performance data management. You'll learn about two common data processing approaches – OLTP and OLAP. Understanding when they apply best will improve your database design process.

You'll work with advanced data modeling techniques and learn to normalize databases. By the end of the course, you'll be efficient at designing and managing your very own databases that you can adjust based on your business needs.

Scalability is an important aspect of app development. You need to make sure that your product keeps working efficiently as the amount of data and the number of users grow. This also applies to the development of data management applications – after all, data is everywhere.

In the Introduction to Scala course, you'll learn about this programming language which was developed specifically for scalability. It's a general-purpose programming language that stands out for its conciseness.

You will learn about working with different data types while using Scala. There are several different ways to write code with this language, so there are lots of basics to cover. Once you're done, you'll be fully capable of writing working program code on your own.

You've certainly heard the term Big Data before. It's pretty self-explanatory – Big Data refers to very large quantities of data that various businesses handle on a daily basis. To accommodate Big Data, your job as an engineer is to develop sufficient pipelines.

The Big Data Fundamentals with PySpark course will take you back a few steps to refresh the PySpark skills you've acquired. The Spark framework is used for Big Data management thanks to its high speed. In fact, Apache Spark is considered one of the best frameworks for Big Data – and you'll get to test it out yourself.

You will work with different modules, frameworks, and datasets to learn how Big Data management works. You'll have access to a number of real-life data sources that will help you figure out the art of building engines.

When you're working with massive quantities of data, it's more than likely that you won't need to process all of it. Preparing everything manually would take a ridiculous amount of time. Thankfully, Apache Spark is here to help make your life easier.

The Data Cleaning in Apache Spark with Python course will introduce you to the process of raw data preparation. It's done to ensure the reliability and quality of data. Your focus is on working with Spark, since this framework runs fast and lets you handle several complex tasks with a single tool.

By the end of this course, you'll be able to efficiently clean your data files and prepare them for processing. As usual, you'll have an opportunity to use real-life data and start working on some of your first pipelines.

MongoDB is a NoSQL database program used to store and explore flexibly structured data – and the subject of the last course in the DataCamp Data Engineer path. You're going to learn the essentials of MongoDB and how it can help you while searching and analyzing data.

The Introduction to MongoDB in Python course will have you working with flexibly structured data. You'll work on structure and substructure levels to filter and relate your files. You'll see how to match patterns to values.

Structured data analysis can be a time-consuming project. So, you'll be shown some shortcuts without sacrificing data integrity.

By the end of this course, not only will you know how to handle bandwidth-related bottlenecks and leverage MongoDB on your servers – you'll also be well on track to becoming a professional data engineer.

Pricing

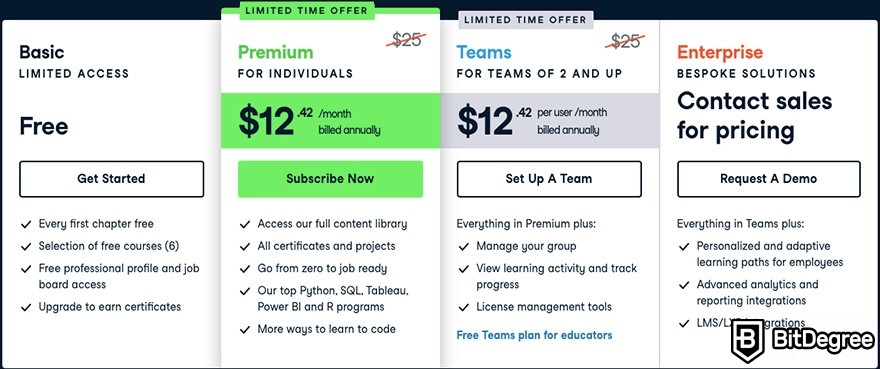

As you’ve seen, some of the courses and assessments in the DataCamp Data Engineer career track can be accessed for free. However, what about the rest of the courses?

Well, if you just want to get a feel for the courses without making a significant commitment, you can check out the first chapter of each course completely for free. That'll give you a taster for things to come. That being said, a couple dozen first chapters won’t turn you into an expert.

Instead, you can start learning properly by subscribing to the DataCamp Premium plan. For $25/month, you’ll be able to access every single course on this career track – and more. The DataCamp catalog offers you more than 350 courses in data science, data engineering, and business analytics.

In addition to the courses, you’ll also be able to take part in projects that use real-life data, check out other career and skill tracks, and even access the certification programs. There’s a lot that you don’t want to miss.

Conclusions

So, you’ve got your path towards a career in data engineering covered. Where should you go next?

Well, firstly, if you’ve completed this course track, we’d love to hear about your experience. You can leave your DataCamp Data Engineer review in the comments below.

As for what you can do now, why not stick around with DataCamp? With the Premium plan, you can jump into other career tracks, like the Machine Learning Scientist path.

Or, if you’d like to get more technical, you can check out the DataCamp skill tracks. We’ve got short guides for the SQL Fundamentals and R Fundamentals skill tracks, so you can learn more.

And now – enjoy your journey into data engineering. With so many opportunities ahead of you, you’ll certainly find the right path for you!